Recently some colleagues shared an interesting documentary video (embedded above, from 3:00 onwards) about how the Himba perceive colors, and how language plays an important role in shaping color perception. It seems intuitive to us that visual perception, especially pre-attentive processing, is hard-wired in the brain. That is, endowed with the same neurological machinery, every one of us perceives colors in the same way. In short, your red is my red, and what I see is what you see.

This view is sometimes characterized as universalism. An opposing view, relativism, claims that higher-level conceptual systems shape our perception. An extreme form of relativism argues that since color categories can vary across languages without constraint, human perception of color can differ across cultures in arbitrary ways. Relativism can be traced back to the Sapir-Whorf hypothesis.

Which view is correct? The empirical evidence in the video seems to suggest that relativism wins. The universalist stance, however, is not to be dismissed so easily. It is reasonable to assume that natural language evolved later than the ability to perceive color (although it may be argued that this ability continues to develop alongside language). Why does a language have the specific color categories it has? Where do these categories come from? In Basic Color Terms (1969), Berlin and Kay identified 11 basic color terms that they argued are universal. In English, these terms correspond to the color categories black, white, red, yellow, green, blue, brown, purple, pink, orange and gray. Of course, not all languages have terms for all 11 categories. Some have only two or three color terms. Nevertheless, there seems to be a strict sequential order in which basic color terms are added as languages evolve. When a language has only two basic color terms, they are black and white. When a language has three, the third is red. Berlin and Kay eventually proposed the following sequence:

black, white

red

green, yellow

blue

brown

purple, pink, orange, gray

It seems, then, that some universal factor (most probably neurological) determines the evolution of linguistic categories for color. Berlin and Kay’s work has since been challenged, and the universalism/relativism debate continues. Much new evidence has emerged, which can be interpreted to support either universalism (e.g. a series of studies by Eleanor Rosch) or relativism (e.g. the work of Debi Roberson). No final conclusion has been reached yet. The real relationship between color perception and language is probably not a simple matter of one influencing the other. It is possible that universalism and relativism are each partly correct and partly wrong. As D’Andrade (1995) has put it nicely:

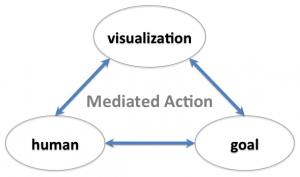

“The argument here is not that of extreme cultural constructionism, which argues that everything is determined by culture, nor that of psychological reductionism, which argues that all culture is determined by the nature of the human psyche. The position taken here is that of interactionism, which hypothesizes that culture and psychology mutually affect each other. The problem, as Richard Shweder (1993:500) says, is to determine ‘how culture and psyche make each other up.’”
